LaTeX templates and examples — Journal articles

Discover a wide range of academic journal LaTeX templates for articles and papers which automatically format your manuscripts in the style required for submission to that journal.

Recent

The very basic LaTeX example/template file that the Journal of Computational and Graphical Statistics provides (as of 2015-09-02)

A default template for responding to reviewers. Contains a brief cover letter, followed by pages for entering the reviewers' comments and your responses to them.

Basic template for submissions to the linguistics journal Diachronica (https://benjamins.com/catalog/dia)

Template published on the website of the journal of the Facultad de Ciencias Exactas, Físicas y Naturales

How to conceal objects from electromagnetic radiation has been a hot research topic. Radar is an object detection system that uses radio waves to determine the range, angle, or velocity of objects. A radar transmitter emits radio waves or microwaves that reflect from any object in their path. The receiver, typically part of the same system as the transmitter, receives and processes these reflected waves to determine properties of the object. Various organizations are working on ways to hide objects from radar in outer space, so that a confidential object could be taken through space without being detected by enemies. This calls for new methods of concealing an object electromagnetically.

[JME] TEMPLATE JME - Journal of Mechatronics Engineering IFCE

The Journal of Mechatronics Engineering is a semiannual electronic publication created by the Federal Institute of Ceará - IFCE. Its aim is to contribute to the dissemination of knowledge through the publication of scientific papers (unpublished and original articles, reviews and scientific notes) in English. Through this work, the editorial board of the journal invites researchers, professionals, and undergraduate and graduate students to share their experiences with the scientific and academic community through our electronic journal.

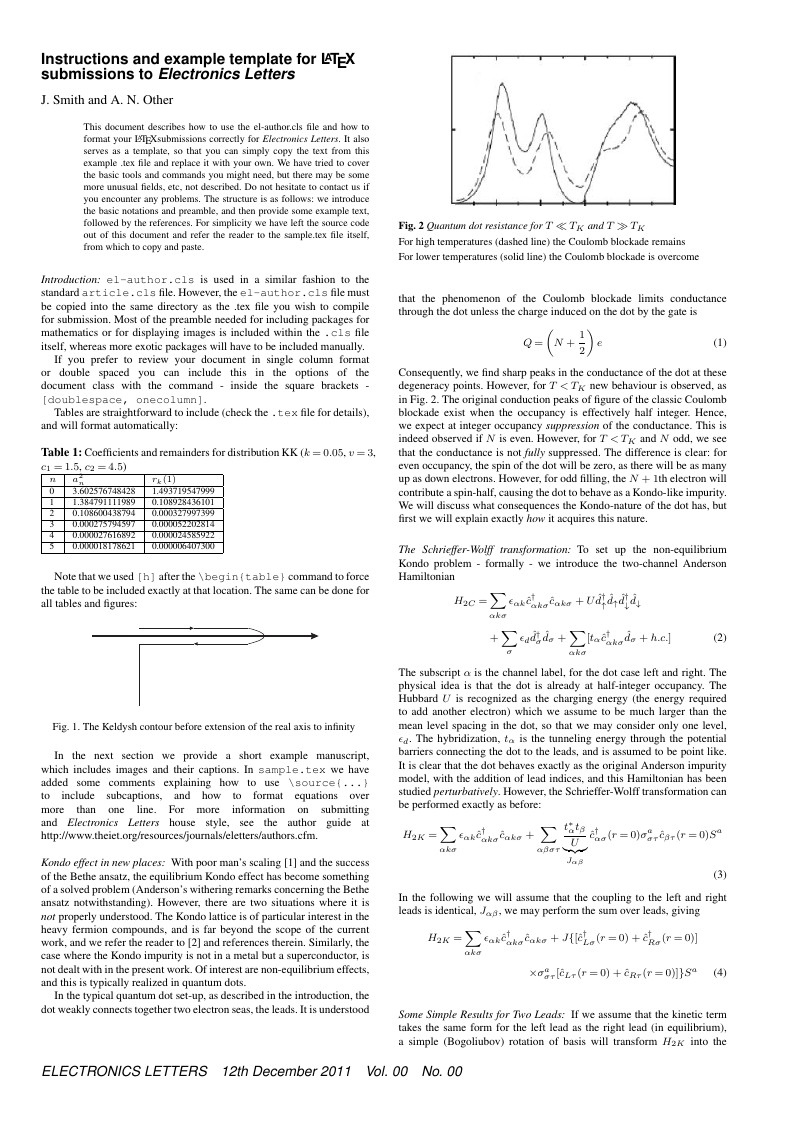

IET Electronics Letters LaTeX template/sample downloaded from Author guide - Electronics Letters.

Modified by Eetu Mäkelä from the [CEURART template](https://github.com/yamadharma/ceurart), whose authors are Dmitry S. Kulyabov, Ilaria Tiddi and Manfred Jeusfeld.

This is the official template for papers to be submitted to Open Geomechanics.

