Overleaf Template Gallery: LaTeX templates and examples (Recent)

Discover LaTeX templates and examples to help with everything from writing a journal article to using a specific LaTeX package.

Annotated bibliography template for Georgia Tech CS-6460 Assignment 5 (Collecting your sources). Citations are in SIGCHI format. Credit for creating this template goes to Brady Hurlburt; I only published it as an Overleaf template. The original files can be found at https://github.gatech.edu/bhurlburt3/bibentry-example

Exercise list template for universities. Created by Diogo Roberto R. Freitas (diogo@poli.br). Free to modify.

Follows the style standards and bibliographic requirements described in the article template for the journal Revista Conexões Ciência e Tecnologia. (Based on the LaTeX template from the journal's site.)

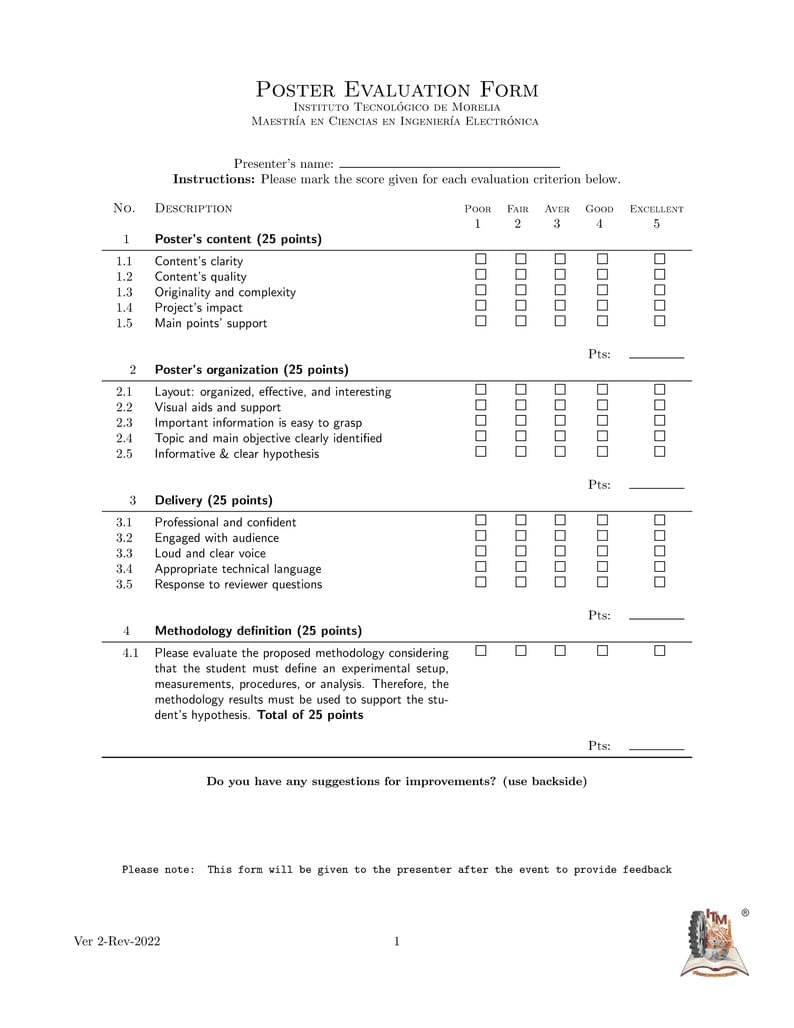

Evaluation form for poster presentation events.

A template for creating course quizzes.

Certificate template for academic events.

Student at IIIT Allahabad Bio-Informatics

Unofficial template for HKUST reference letters

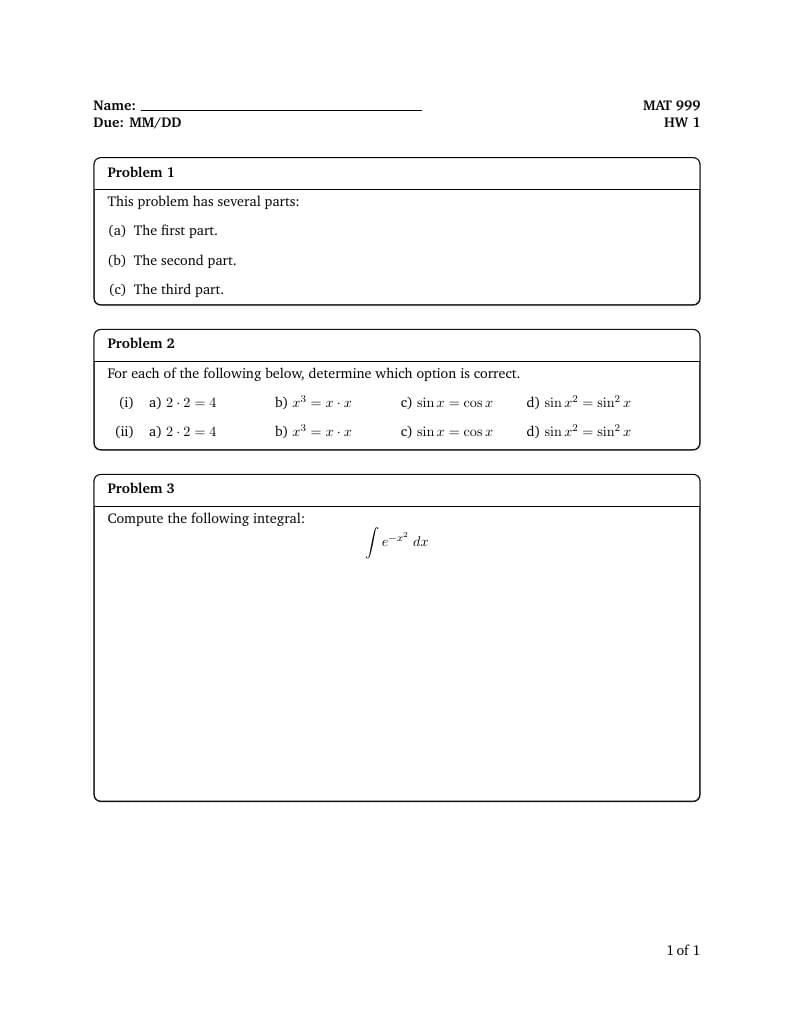

A template for creating course homework assignments. Every assignment uses the same style file, which avoids the need for a long preamble in each one. The 'Main Document' should be set to the homework you are currently working on.
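As a rough illustration of the shared-style-file idea, here is a minimal sketch. The package name `homework`, the macro `\hwtitle`, and the packages it loads are assumptions made up for this example, not the template's actual contents. The `filecontents` block only writes the style file out so that the sketch compiles on its own; in a real project `homework.sty` would be a separate file sitting next to the homework files.

```latex
% Write a hypothetical shared style file (homework.sty) next to this document.
% In a real project this would be its own file, reused by every homework.
\begin{filecontents}{homework.sty}
\ProvidesPackage{homework}
\RequirePackage{amsmath}
\RequirePackage[margin=1in]{geometry}
% Hypothetical helper macro: prints an unnumbered heading for the assignment.
\newcommand{\hwtitle}[1]{\section*{Homework #1}}
\end{filecontents}

% One homework file. In Overleaf, this is the file chosen as 'Main Document'.
\documentclass{article}
\usepackage{homework} % the whole preamble lives in the shared style file

\begin{document}

\hwtitle{1}

\begin{enumerate}
  \item Show that $\sum_{k=1}^{n} k = \frac{n(n+1)}{2}$.
\end{enumerate}

\end{document}
```

With this layout, a second assignment needs only its own short `.tex` file that loads the same package, and switching the 'Main Document' setting selects which one is compiled.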

